Mutant examiners

“I am afraid your grades were almost derailed by a mutant algorithm” – Boris Johnson

There are lots of reasons the UK A-level fiasco (and subsequent government U-turn) should never have happened.

Maybe there shouldn’t have been a requirement to sign a ridiculously broad confidentiality agreement if you wanted to be involved in the review process, which meant there was no proper scrutiny by outside experts who could have caught the obvious howlers*.

Maybe the fact that the methodology benefitted exam centres with smaller student/subject cohorts - which in turn are disproportionately private schools - should have given a Conservative government pause for thought over the perception they were playing favourites.

Maybe if anyone had really thought through the politics of university admissions based on an estimate - rather than an assessment - of merit, it never would have been attempted. Imagine the Premier League trying to settle cancelled matches with a statistical model, or the IOC running the Olympics on a spreadsheet. There’s enough disappointment and tragedy built into the process when it works well, let alone when the outcome is outside the control of the participants.

Finally, just before A-level results were published, Scotland went through the same crisis, with the same eventual outcome. A quick glance across the border could have prevented a huge amount of distress for pupils and embarrassment for the government.

However, none of those things happened, and a critical mass of people in the right jobs managed to convince themselves that an algorithm that was at best around 50-70% accurate at assigning the correct grade was somehow going to be good enough. There is a jaw-dropping section of the Ofqual technical paper that explains why anyone in their right mind could have thought this was acceptable:

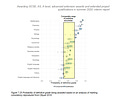

To provide context for these measures [of predicted accuracy], it is important that they are considered against analogous measures from a typical year. For the purposes of comparison, measures of qualification-level classification accuracy from a recent Ofqual study examining marking consistency [my emphasis] are presented in Figure 7.25. These metrics are based on the probability of examiners awarding marks to students’ responses that result in the same grade being awarded as if the marking had been performed by the senior examiner. These probabilities of receiving the ‘definitive’ grade, therefore, provide a meaningful basis for comparison with the probabilities of an exact grade match included in the accuracy measures reported here.

As the graph shows, outside of Maths and Science, the probability of an actual examiner awarding the same grade as a senior examiner marking the exact same paper is <70%, and in some cases a lot lower. The argument for the algorithm being a good method is just: “Yeah sure, this algorithm is a bit rubbish. But you know what is also a bit rubbish? Actual examiners”.

How is the inconsistency of examinations not a national scandal?

“Commenting on the research, Ofqual chief regulator Sally Collier said: ‘Our latest research confirms that the quality of marking of GCSEs, AS and A levels in England is good, and compares favourably with other examination systems internationally.’”

OK, so how is the inconsistency of examinations not a global scandal? And it’s not just school: university grades are also horrifically inconsistent, and it might even be much worse than school. Which does make me feel better about the fact that I got my worst finals mark in the subject my professors believed I was strongest in, and in which I at one point planned to do a PhD. I’m not bitter or anything.

So what to make of all this then? I don’t have the answer, but my personal view is that multiple choice tests are underrated. While they also have significant drawbacks, at least they are far more consistent and efficient to administer**. Thoughtful, human marking has its place in the educational toolkit, and some subjects are much harder to assess on multiple choice than others. But where assessment has implications for your life chances, I’d err towards prioritising consistency.

*My personal favourite howler is that Ofqual screwed up the backtest “demonstrating” the predictive accuracy of the algorithm. To briefly summarise: the predictions were a function of an estimated grade curve at a school/subject level based on history, combined with teacher rankings of this year’s cohort to assign each point on the estimated grade curve to each student. Problem was, when they did the 2019 accuracy backtest they used the actual marks for the rank, which is equivalent to assuming teachers can rank order their pupils with 100% accuracy. Oops.
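
To make the backtest problem concrete, here is a toy sketch in Python. It is mine, not Ofqual’s: the function names, the six made-up pupils and the three-grade curve are all illustrative assumptions, and the real model was far more involved. It even assumes the estimated grade curve is exactly right, so the only thing that can go wrong is the rank order.

```python
def assign_grades(rank_order, grade_curve):
    """Hand grades out down a rank order: best-ranked pupil gets the best slot."""
    slots = [grade for grade, count in grade_curve for _ in range(count)]
    return dict(zip(rank_order, slots))


def exact_match_rate(predicted, actual):
    """Share of pupils whose predicted grade equals their actual grade."""
    return sum(predicted[p] == actual[p] for p in actual) / len(actual)


# Hypothetical school/subject cohort: pupil -> actual exam mark (invented data).
actual_marks = {"a": 90, "b": 80, "c": 70, "d": 60, "e": 50, "f": 40}

# Assumed grade curve for the cohort: two As, two Bs, two Cs.
grade_curve = [("A", 2), ("B", 2), ("C", 2)]

# The grades the pupils actually got, taken here to follow the curve exactly.
actual_rank = sorted(actual_marks, key=actual_marks.get, reverse=True)
actual_grades = assign_grades(actual_rank, grade_curve)

# The flawed backtest: rank pupils by their actual marks, then "predict".
backtest = assign_grades(actual_rank, grade_curve)

# A more realistic input: a teacher ranking with two adjacent pairs swapped.
teacher_rank = ["a", "c", "b", "d", "f", "e"]
forecast = assign_grades(teacher_rank, grade_curve)

print(exact_match_rate(backtest, actual_grades))  # perfect by construction
print(exact_match_rate(forecast, actual_grades))  # lower once ranks are imperfect
```

Ranking by the actual marks reproduces the actual grades exactly, so the backtest cannot tell you anything about rank error. In this toy cohort, two small swaps near the grade boundaries are enough to give a third of the pupils the wrong grade, even with a perfect grade curve.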

**We shouldn’t underestimate the fact that multiple choice tests are vastly cheaper and faster. That’s money and time that can be spent on better things!

What I’m reading at the moment

The Goblin Emperor by Katherine Addison. Back when Game of Thrones first came out I remember people describing it as “fantasy, but with politics!” This, however, is actually fantasy, but with politics.

Works in Progress - some of my friends have set up a new online periodical. I think it’s great. Go check it out!